Databricks DataInsight Notebook Demo

This article walks through a basic example of using Notebooks in Databricks DataInsight (DDI).

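Prerequisites

You have logged in to the Alibaba Cloud Databricks console with your main account.

You have created a cluster. For details, see Create a cluster.

You have created a non-system-directory bucket in the OSS console. For details, see Create a bucket.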

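Warning: The bucket created the first time you use DDI is the system directory bucket. Storing data in it is not recommended; create a separate bucket for reading and writing data.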

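Note: DDI supports password-free access to OSS paths of the form oss://BucketName/Object

BucketName is the name of your bucket.

Object is the access path of the file uploaded to OSS.

Example: read the file at demo/The_Sorrows_of_Young_Werther.txt in the bucket named databricks-demo-hangzhou.

// Read a text file from an OSS path
val text = sc.textFile("oss://databricks-demo-hangzhou/demo/The_Sorrows_of_Young_Werther.txt")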
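The oss://BucketName/Object layout described above can be sketched in plain Python. This is only an illustration of how the two parts combine and separate; the helper names `build_oss_path` and `split_oss_path` are hypothetical and are not part of DDI or any OSS SDK.

```python
from typing import Tuple
from urllib.parse import urlparse


def build_oss_path(bucket_name: str, object_key: str) -> str:
    """Join a bucket name and an object key into oss://BucketName/Object."""
    # Drop any leading slash on the key so the bucket is followed by one "/".
    return f"oss://{bucket_name}/{object_key.lstrip('/')}"


def split_oss_path(path: str) -> Tuple[str, str]:
    """Split oss://BucketName/Object back into (BucketName, Object)."""
    parsed = urlparse(path)
    if parsed.scheme != "oss" or not parsed.netloc:
        raise ValueError(f"not an OSS path: {path!r}")
    return parsed.netloc, parsed.path.lstrip("/")


# Values taken from the example in this article.
path = build_oss_path("databricks-demo-hangzhou", "demo/The_Sorrows_of_Young_Werther.txt")
print(path)
print(split_oss_path(path))
```

A helper like this can catch a missing bucket name or a doubled slash before the path is handed to sc.textFile.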

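Step 1: Create a cluster and access Notebook with a Knox account

To create a cluster, see https://help.aliyun.com/document_detail/167621.html. Be sure to configure a RAM sub-account and save the Knox password; you need them when you log in to the Web UI.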

Step 2: Create a Notebook and debug with visualizations

Sample note download: CASE1-DataInsight-Notebook-Demo.zpln

Sample text download: The_Sorrows_of_Young_Werther.txt

Sample Python library download: matplotlib-3.2.1-cp37-cp37m-manylinux1_x86_64.whl

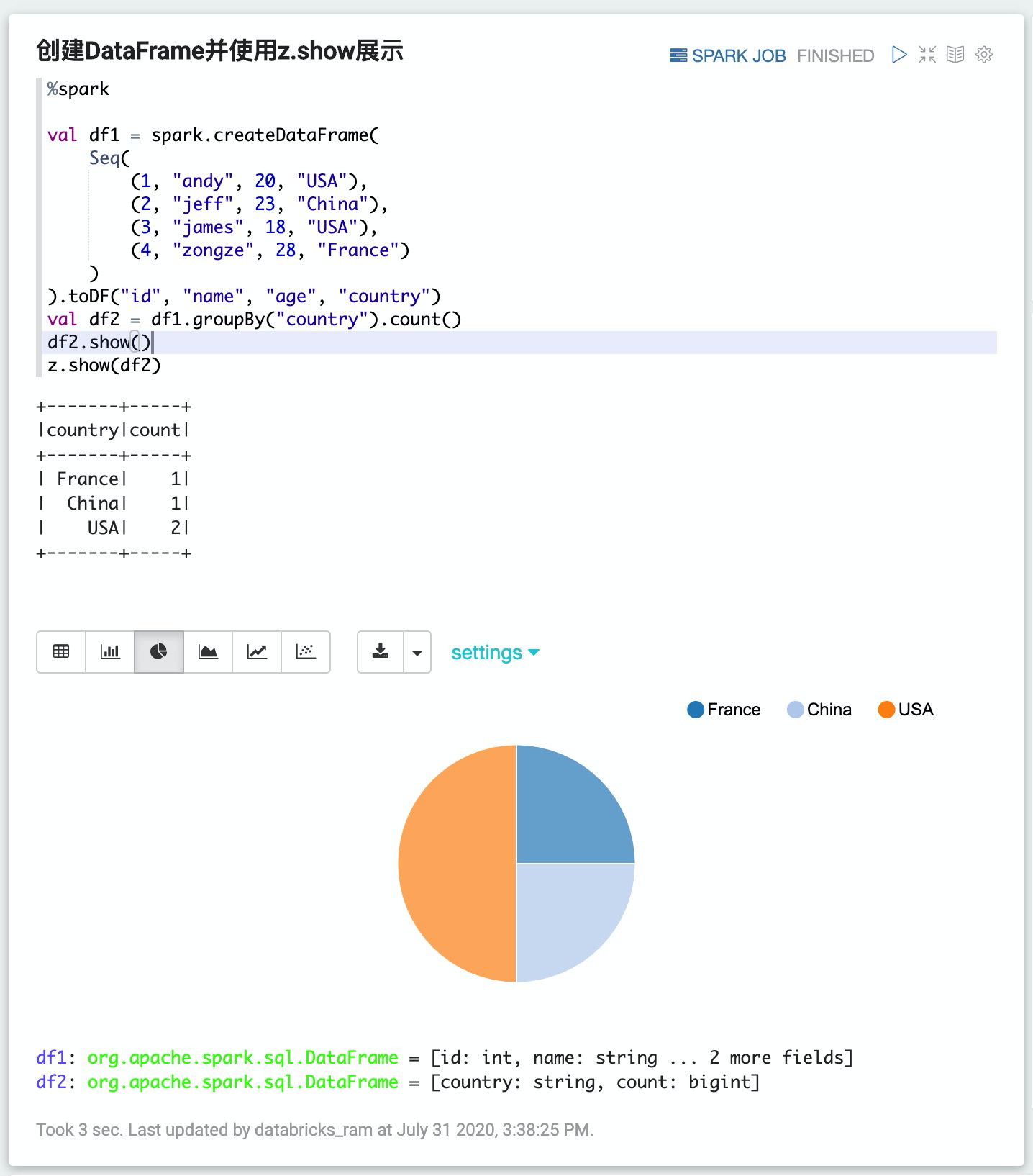

1. Create a DataFrame and display it with z.show

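%spark
// Build a small DataFrame from an in-memory sequence of tuples
val df1 = spark.createDataFrame(
  Seq(
    (1, "andy", 20, "USA"),
    (2, "jeff", 23, "China"),
    (3, "james", 18, "USA"),
    (4, "zongze", 28, "France")
  )
).toDF("id", "name", "age", "country")
// Count the rows for each country
val df2 = df1.groupBy("country").count()
// Print the result as plain text, then render it with the notebook's table and chart view
df2.show()
z.show(df2)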

2. Create a DataFrame and query it visually via %spark.sql

%spark
val df1 = spark.createDataFrame(Seq((1, "andy", 20, "USA"), (2, "jeff", 23, "China"), (3, "james", 18, "USA"), (4, "zongze", 28, "France"))).toDF("id", "name", "age", "country")
// register this DataFrame first before querying it via %spark.sql
df1.createOrReplaceTempView("people")

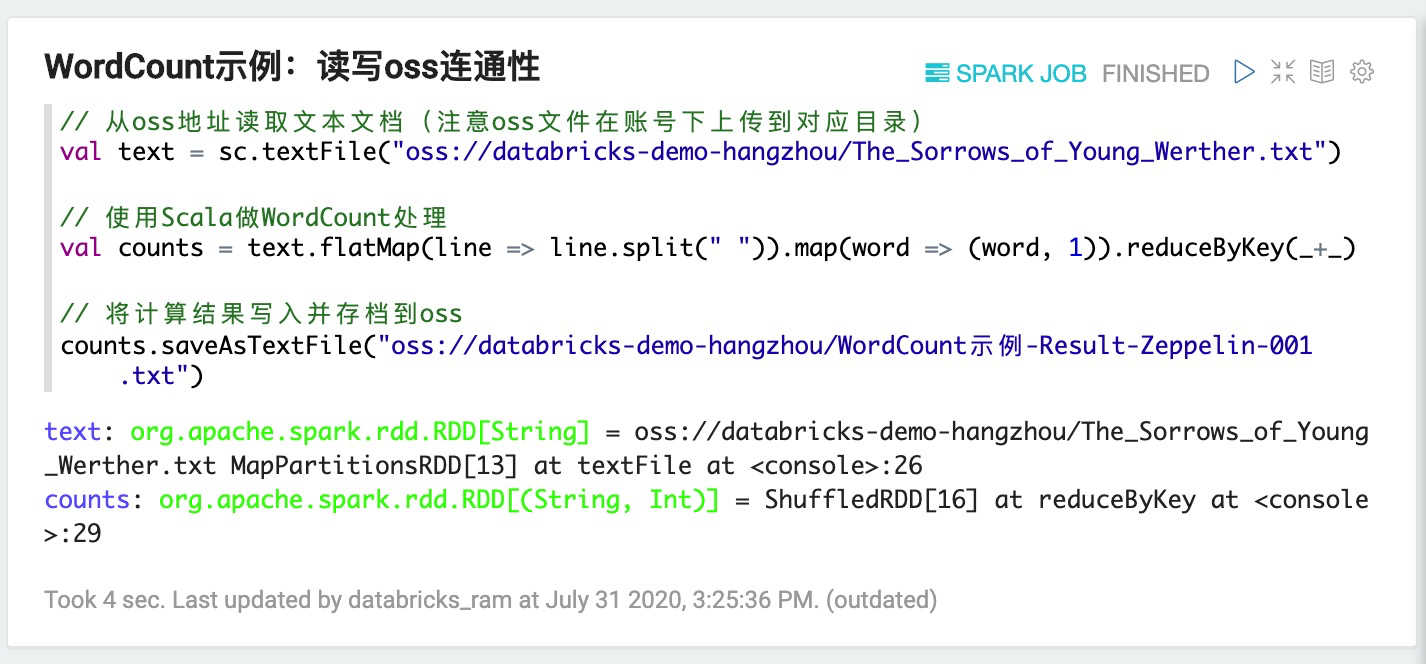
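The query typed into the %spark.sql paragraph appears only in the screenshot, so the statement below is an assumed example rather than the original one. To make it runnable without a cluster, this sketch loads the same four rows into Python's built-in sqlite3 module as a local stand-in for the `people` temporary view; in the notebook, only the text of `QUERY` would go into a %spark.sql paragraph.

```python
import sqlite3

# Assumed example query (not taken from the article): rows per country.
QUERY = "SELECT country, COUNT(*) AS cnt FROM people GROUP BY country ORDER BY country"

# Same sample rows as the DataFrame registered as the "people" view.
PEOPLE = [
    (1, "andy", 20, "USA"),
    (2, "jeff", 23, "China"),
    (3, "james", 18, "USA"),
    (4, "zongze", 28, "France"),
]

# sqlite3 is only a local stand-in here; it is not how DDI runs the query.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE people (id INTEGER, name TEXT, age INTEGER, country TEXT)")
conn.executemany("INSERT INTO people VALUES (?, ?, ?, ?)", PEOPLE)

rows = conn.execute(QUERY).fetchall()
conn.close()

for country, cnt in rows:
    print(country, cnt)
```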

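3. Test OSS connectivity with a basic WordCount example

// Read a text file from an OSS path (the file must already be uploaded to this location under your account)
val text = sc.textFile("oss://databricks-demo-hangzhou/The_Sorrows_of_Young_Werther.txt")
// WordCount in Scala: split each line into words, pair each word with 1, then sum the counts per word
val counts = text.flatMap(line => line.split(" ")).map(word => (word, 1)).reduceByKey(_+_)
// Write the result to OSS; saveAsTextFile creates a directory of part files at this path and by default fails if the path already exists
counts.saveAsTextFile("oss://databricks-demo-hangzhou/WordCount示例-Result-Zeppelin-001.txt")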

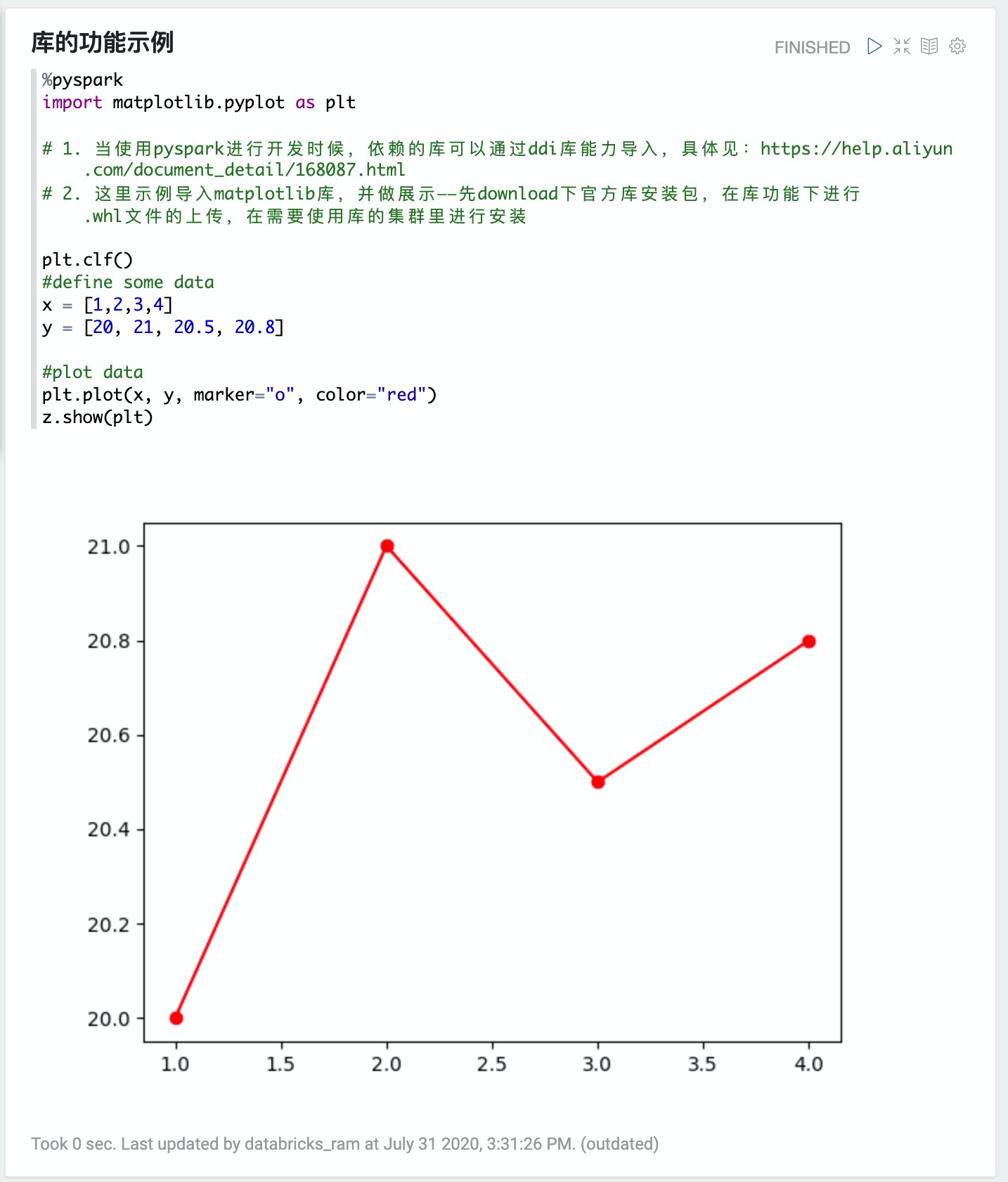
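To see what the flatMap, map, and reduceByKey chain computes, the same three steps can be sketched locally in plain Python without Spark or OSS. The function name `word_count` and the two sample lines are made up for illustration; they are not taken from the sample text file.

```python
from collections import Counter
from typing import Dict, Iterable


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Count words the way the WordCount paragraph does, but on a local list."""
    counts: Counter = Counter()
    for line in lines:
        # flatMap step: split every line on a single space.
        for word in line.split(" "):
            # map + reduceByKey steps: each word contributes 1 to its total.
            counts[word] += 1
    return dict(counts)


# Made-up sample input; note the double space in the second line.
sample = ["the sorrows of young werther", "the young  man"]
result = word_count(sample)

for word, n in sorted(result.items()):
    print(repr(word), n)
```

Splitting on a single space turns consecutive spaces into empty-string tokens, which then get counted like any other word. If that is unwanted, filtering out empty tokens before counting is one option.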

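4. Library feature example

%pyspark
import matplotlib.pyplot as plt
# 1. When developing with pyspark, dependent libraries can be imported through the DDI library feature; see https://help.aliyun.com/document_detail/168087.html
# 2. This example imports matplotlib and plots with it: download the official package first, upload the .whl file under the library feature, then install it on the cluster that needs it
plt.clf()
# define some data
x = [1, 2, 3, 4]
y = [20, 21, 20.5, 20.8]
# plot data
plt.plot(x, y, marker="o", color="red")
z.show(plt)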
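Before uploading a .whl file, it can help to read the tags in its file name, since a wheel tagged for one Python version or platform will not install on another. The sketch below splits the sample file name according to the standard wheel naming scheme (name, version, optional build tag, Python tag, ABI tag, platform tag). The helper `parse_wheel_filename` is hypothetical, and which Python version a given DDI cluster runs is not stated in this article.

```python
from typing import Dict


def parse_wheel_filename(filename: str) -> Dict[str, str]:
    """Split a wheel file name into its naming-scheme components."""
    if not filename.endswith(".whl"):
        raise ValueError(f"not a wheel file: {filename!r}")
    parts = filename[: -len(".whl")].split("-")
    # 5 parts without a build tag, 6 parts with one.
    if len(parts) not in (5, 6):
        raise ValueError(f"unexpected wheel file name: {filename!r}")
    python_tag, abi_tag, platform_tag = parts[-3:]
    return {
        "name": parts[0],
        "version": parts[1],
        "python_tag": python_tag,
        "abi_tag": abi_tag,
        "platform_tag": platform_tag,
    }


# File name of the sample library linked in this article.
info = parse_wheel_filename("matplotlib-3.2.1-cp37-cp37m-manylinux1_x86_64.whl")
for key, value in info.items():
    print(f"{key}: {value}")
```

Here the `cp37` tag indicates a build for CPython 3.7 and `manylinux1_x86_64` indicates 64-bit x86 Linux.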
